Simulation Experiments on (the Absence of) Ratings Bias in Reputation Systems

By Jacob Thebault-Spieker on

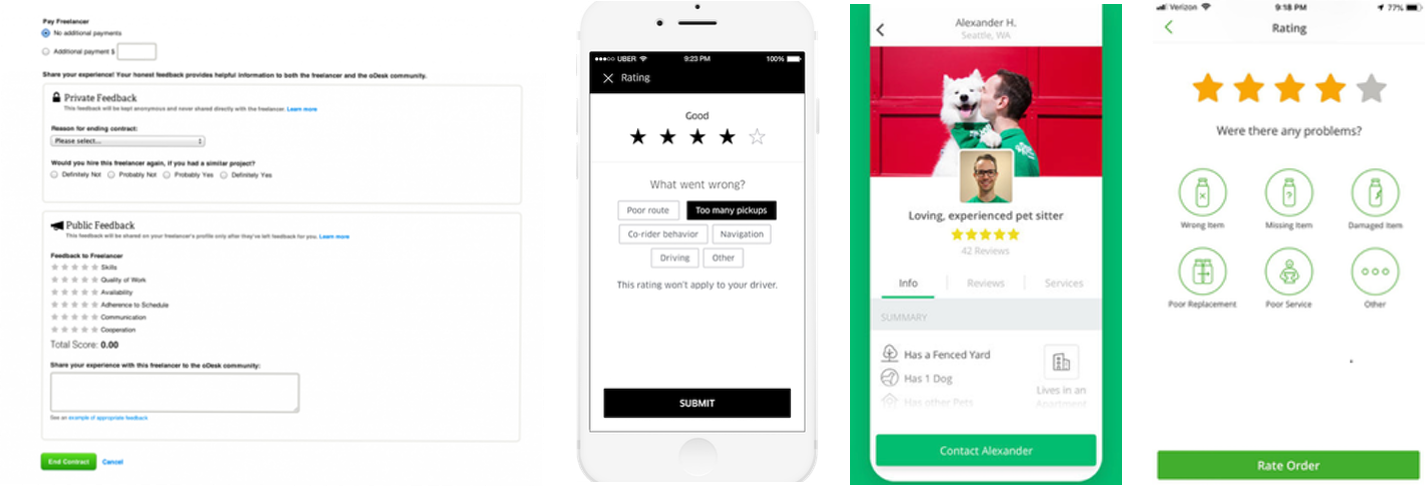

It seems like every day there is a new gig work platform (e.g. UpWork, Uber, Airbnb, or Rover) that uses a 5-star scale to rate workers. This helps workers build reputation and develop the trust necessary for gig work interactions, but there is a big concern: lots of prior work finds that race and gender biases occur when people evaluate each other. In an upcoming paper at the 2018 ACM CSCW conference, we describe what we thought would be a straightforward study of race and gender biases in 5-star reputation systems. However, it turned into an exercise in repeated experimentation to verify surprising results and careful statistical analysis to better understand our findings. Ultimately, we ended up with a future research agenda composed of compelling new hypotheses about race, gender and five-star rating scales. (more…)

Hannah’s proposal focused on strengthening a social network user’s experience by bootstrapping from previously established social network presence.

Hannah’s proposal focused on strengthening a social network user’s experience by bootstrapping from previously established social network presence.