Featured Research

We publish research articles in conferences and journals primarily in the field of computer science, but also in other fields including psychology, sociology, and medicine. See our blog for research highlights and our publications page for a comprehensive view of our research contributions. Here are excerpts from recent articles:

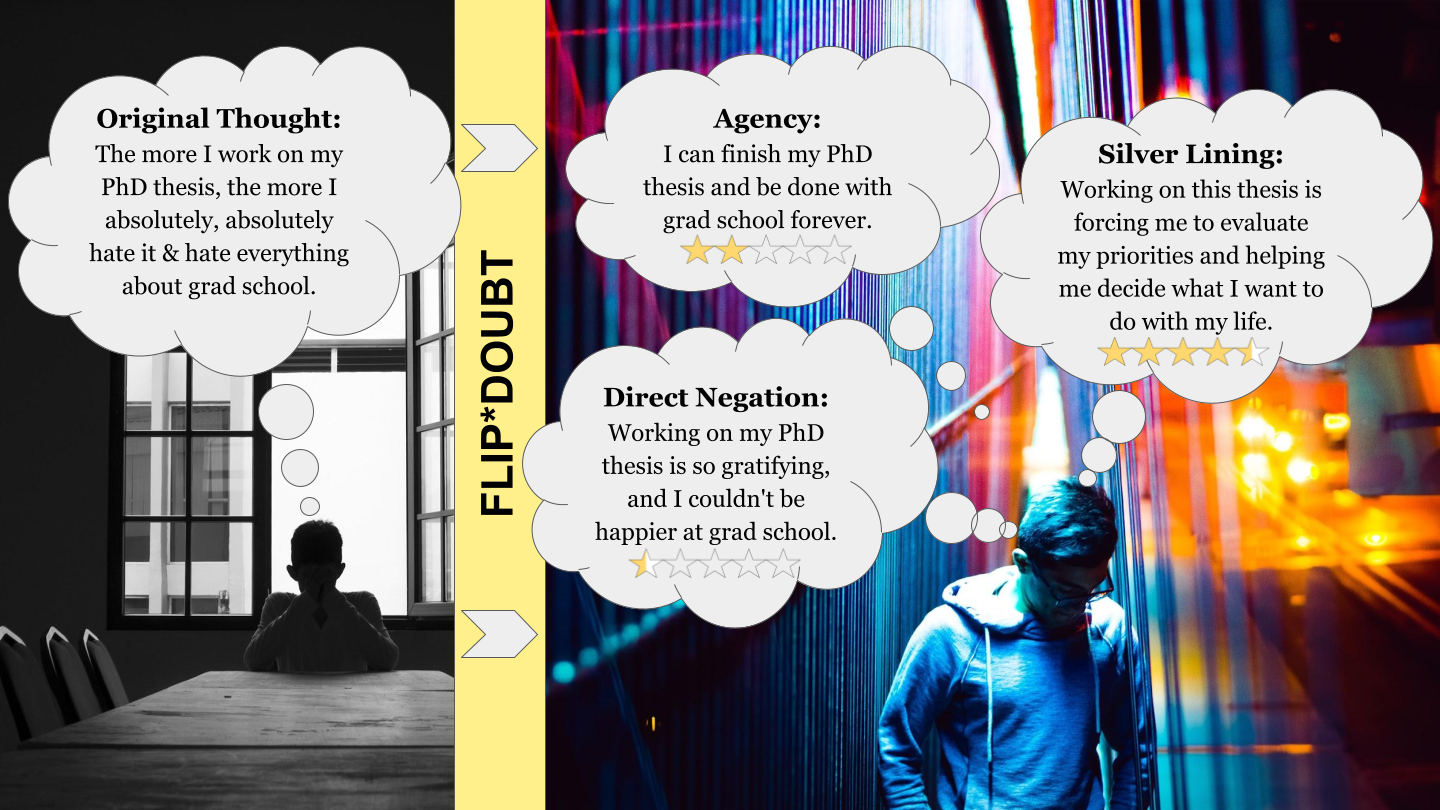

Rethinking Mental Health Interventions: How Crowd-Powered Therapy Can Help Everyone Help Everyone

Rates of mental illness continue to rise every year, yet there are nowhere near enough trained mental health professionals to meet the need. How can technology create new ways to deliver clinically validated therapeutic techniques? Read this blog post on our deployment of the Flip*Doubt prototype, an Honorable Mention #CSCW2021 paper. More…

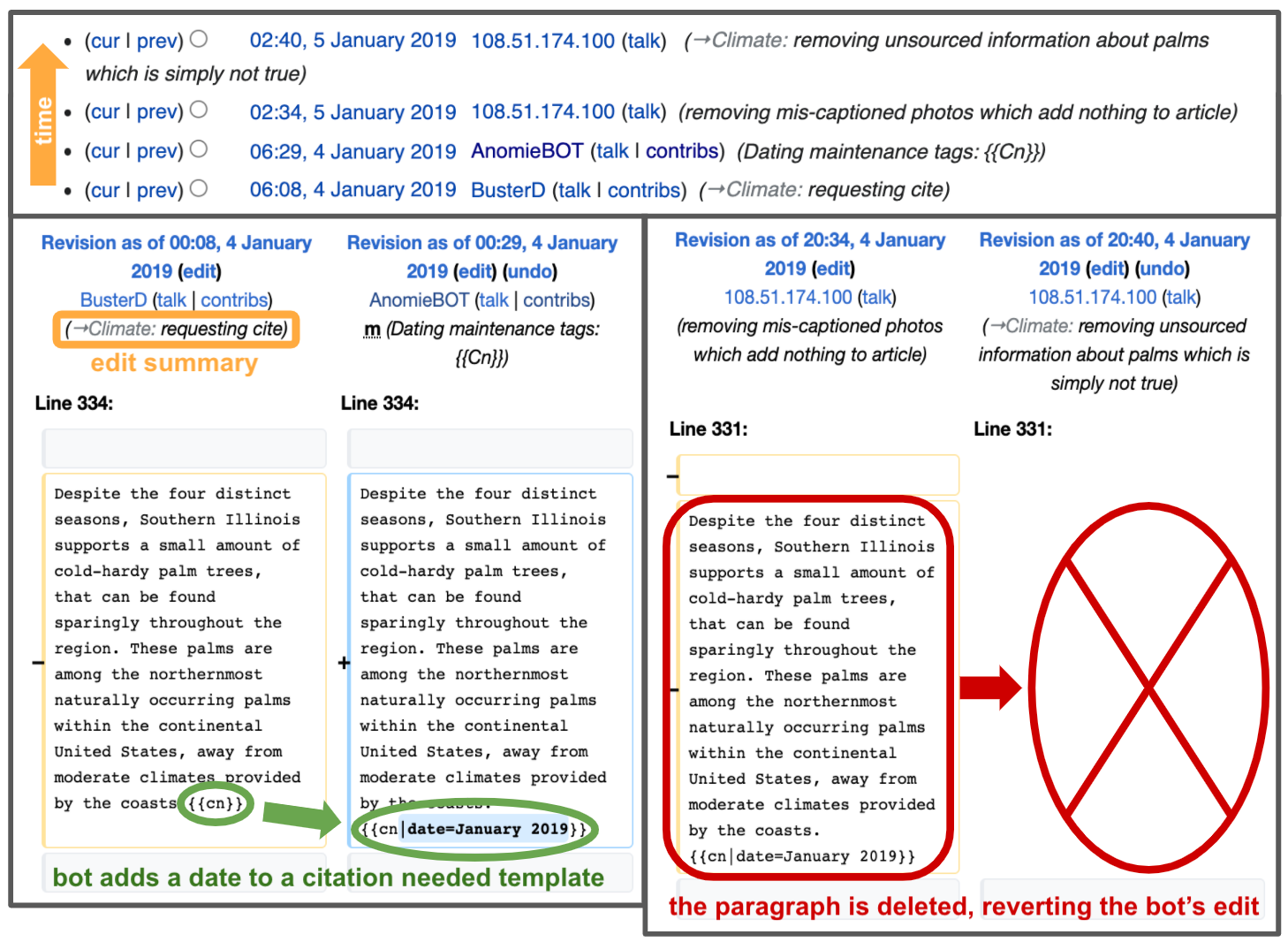

Are Bots Ravaging Online Encyclopedias?

Wikipedia is the online encyclopedia that anyone can edit. However, you probably didn’t know that “bots” also edit Wikipedia! Read this blog post about conflict among bots, from the University of Minnesota’s “human-centered computing for social good” REU. More…

Featured Projects

We build and study real systems, going back to the release of MovieLens in 1997. See our projects page for a full list of active projects; see below for some featured projects.

MovieLens is a website that helps people find movies to watch. It has hundreds of thousands of registered users. We conduct online field experiments in MovieLens in the areas of automated content recommendation, recommendation interfaces, tagging-based recommenders and interfaces, member-maintained databases, and intelligent user interface design.

Find bike routes that match the way you ride. Share your cycling knowledge with the community. Cyclopath is a geowiki: an editable map where anyone can share notes about roads and trails, enter tags about special locations, and fix map problems – like missing trails. Hundreds of Twin Cities cyclists are already doing this, making Cyclopath the most comprehensive and up-to-date bicycle information resource in the world.

LensKit is an open source toolkit for building, researching, and studying recommender systems. Do you need a recommender for your next project? LensKit provides high-quality implementations of well-regarded collaborative filtering algorithms and is designed for integration into web applications and other similarly complex environments.

More About GroupLens

GroupLens is headed by faculty from the Department of Computer Science and Engineering at the University of Minnesota, and is home to a variety of students, staff, and visitors.